ProcessOne: ejabberd 22.05

A new ejabberd release is finally here! ejabberd 22.05 is the result of five months of work and 200 commits, and it includes many improvements (MQTT, MUC, PubSub, …) and bug fixes.

- Improved MQTT, MUC, and ConverseJS integration

- New installers and container

- Support Erlang/OTP 25

When upgrading from the previous version, please note: there are minor changes in SQL schemas, the included rebar and rebar3 binaries require Erlang/OTP 22 or higher, and make rel uses different paths. There are no break … ⌘ Read more

GitHub enables the development of functional safety applications by adding support for coding standards AUTOSAR C++ and CERT C++

GitHub is excited to announce the release of CodeQL queries that implement the standards CERT C++ and AUTOSAR C++. These queries can aid developers looking to demonstrate ISO 26262 Part 6 process compliance. ⌘ Read more

Biden, China’s Xi ‘will talk’ says US president, weighing action on tariffs

Biden said he would be talking to Xi and was ‘in the process’ of making a decision about the tariffs imposed by the Trump administration on Chinese imports. ⌘ Read more

ProcessOne: Announcing ejabberd DEB and RPM Repositories

Today, we are happy to announce our official Linux packages repository: a source of .deb and .rpm packages for ejabberd Community Server. This repository provides a new way for the community to install and upgrade ejabberd.

All details on how to set this up are described on the dedicated website:


mprocs: A new way to run multiple shell applications in one shell

With a cool process list. And it works on Linux, Mac, & Windows. ⌘ Read more

“Common Table Expressions in SQL”

I’m currently working on a project that involves a lot of data processing and therefore databases. This means that we often come into contact with SQL at work and have to write an SQL query at least once a day. ⌘ Read more
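The excerpt doesn't show the author's own queries, so here is a minimal, self-contained sketch of what a common table expression looks like. It uses Python's built-in `sqlite3` module and a hypothetical `orders` table. The first query uses a CTE to name an intermediate result, and the second uses a recursive CTE.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical example data; the post does not show the author's schema.
conn.executescript("""
CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, amount REAL);
INSERT INTO orders (customer, amount) VALUES
    ('alice', 30), ('alice', 20), ('bob', 5), ('carol', 70);
""")

# A CTE: the WITH clause names an intermediate result ("totals") that the
# main query can then read like an ordinary table.
big_spenders = conn.execute("""
    WITH totals AS (
        SELECT customer, SUM(amount) AS total
        FROM orders
        GROUP BY customer
    )
    SELECT customer, total FROM totals
    WHERE total >= 50
    ORDER BY customer
""").fetchall()

# A recursive CTE: generate the numbers 1..5 without any table.
numbers = conn.execute("""
    WITH RECURSIVE n(x) AS (
        SELECT 1
        UNION ALL
        SELECT x + 1 FROM n WHERE x < 5
    )
    SELECT x FROM n
""").fetchall()

print(big_spenders)
print(numbers)
```

Compared with nesting the aggregation as a subquery, the CTE puts the intermediate step first and gives it a name. This tends to keep longer daily queries readable.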

who has taken the train to crazytown the furthest? (and hasn’t suffered a nervous breakdown in the process)

Erlang Solutions: Understanding Processes for Elixir Developers

This post is for all developers who want to try Elixir or are taking their first steps in Elixir. This content is aimed at those who already have some experience with the language.

This will help explain one of the most important concepts in the BEAM: processes. Although Elixir is a general-purpose programming language and you don’t need to understand how the virtual machine works, if you want to take advantage … ⌘ Read more

sometimes i think i should return to a cleaner state of mind, abandon all big never-to-be-finished projects, and write simple text-processing utilities on a raspberry pi running plan 9, improvising fractile jazz over a lonely lake and spend most of my remaining time meditating.

ProcessOne: ejabberd 21.12

This new ejabberd 21.12 release comes after five months of work and contains more than one hundred changes, many of which are major improvements or features, along with several bug fixes.

When upgrading from previous versions, please note: there are changes in mod_register_web behaviour and in the PostgreSQL database; please check whether they affect your installation.

A more detailed explanation of those … ⌘ Read more

Ubuntu maker, Canonical, announces new employment interview process

Now with twice the number of essay questions. ⌘ Read more

I didn’t get around to blogging about the fact that Miniflux recently got a new version. With it, if an entry doesn’t have a title, it finally shows a snippet of the content instead of just the URL as the title. A great new feature if you follow a lot of micro-blogs. Regarding micro-blogs, I’m also in the process of reading Manton Reece’s book draft. ⌘ Read more

Monal IM: Insights into Monal Development

TLDR:

Info: Monal will drop support for iOS 12, iOS 13 and macOS Catalina!

We are searching for a SwiftUI developer.

We need a new, simplified website.

With better continuous funding, our push servers will move from the US to Europe.

We have a new support email address: info@monal-im.org

Two years ago we decided to rewrite the Monal app almost entirely and improve it gradually in the process, instead of creating another XMPP Client for iOS and macOS. We suc … ⌘ Read more

**2022-02-24 feature/6.0 Android test plan**

Overview: Will test the upgrade path from a known state to the new version to ensure that settings and app state are maintained during the upgrade process.

Version 6.0 of the libro.fm Android app introduces an entirely new local database. This testing focuses on ensuring that local data remains intact between versions.

Notes: This evening I was mostly focused on getting a successful build of feature/6.0 onto my test device or the emulator. So far, no dice. My next … ⌘ Read more

@screem@yarn.yarnpods.com we have had to really shorten our process. I think long interviews were scaring off talent.

Hi, today I’m working on image processing and some web stuff :)

Prosodical Thoughts: Prosody 0.11.12 released

We are pleased to announce a new minor release from our stable branch.

This is a security release that addresses a denial-of-service vulnerability in Prosody’s mod_websocket. For more information, refer to the 20220113 advisory.

A summary of changes in this release:

Security

- util.xml: Do not allow doctypes, comments or processing instructions
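Prosody's fix lives in its own Lua `util.xml` module. As an analogous illustration only, here is a minimal Python sketch of the same policy using the standard library's `xml.parsers.expat`: the parser refuses any input that contains a doctype, a comment, or a processing instruction. Refusing doctypes also rules out entity declarations, which are a well-known vector for expansion-based denial of service. The names `strict_parse` and `ForbiddenConstruct` are hypothetical.

```python
import xml.parsers.expat


class ForbiddenConstruct(ValueError):
    """Raised when the input contains a construct the parser refuses."""


def strict_parse(data: bytes) -> list:
    """Parse `data`, returning element names; reject doctypes, comments and PIs."""
    parser = xml.parsers.expat.ParserCreate()
    names = []

    def refuse(kind):
        def handler(*args):
            # Exceptions raised in expat callbacks abort Parse() and propagate.
            raise ForbiddenConstruct(kind)
        return handler

    parser.StartElementHandler = lambda name, attrs: names.append(name)
    parser.StartDoctypeDeclHandler = refuse("doctype")
    parser.CommentHandler = refuse("comment")
    parser.ProcessingInstructionHandler = refuse("processing instruction")
    parser.Parse(data, True)
    return names
```

For example, `strict_parse(b"<a><b>ok</b></a>")` returns the element names. `strict_parse(b"<!DOCTYPE a><a/>")` raises `ForbiddenConstruct` before any entity declarations could be processed.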

As usual, download instructions for many platforms can be f … ⌘ Read more

How we ship GitHub Mobile every week

Learn how the GitHub Mobile Team automates their release process with GitHub Actions. ⌘ Read more

Peter Saint-Andre: Cultivating Curiosity

In my drive to hold fewer opinions (or at least hold them less strongly), for a while I tried to cultivate a healthy skepticism about things I believe, for instance by attempting to question one opinion every week. This didn’t work, at least for me, because it felt too negative. Instead, now I’m working to cultivate curiosity. Here are a few thoughts on the process… ⌘ Read more

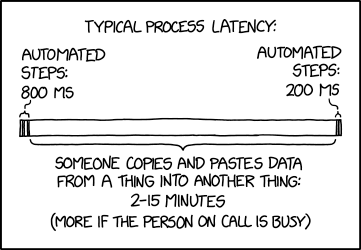

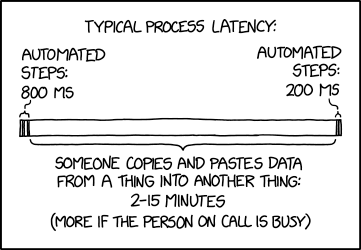

Latency

⌘ Read more

Building Docker images in Drone CI using Docker-in-Docker

This evening I tried to improve the build process of GoBlog. GoBlog is built using Drone CI and Docker. The problem was that two image variants need to be built, one based on the other, and the whole thing always took quite a long time. ⌘ Read more

Erlang Solutions: Blockchain Tech Deep Dive 1/4

INTRODUCTION

Blockchain technology is transforming nearly every industry, whether it be banking, government, fashion or logistics. The benefits of using blockchain are substantial – businesses can lower transaction costs, free up capital, speed up processes, and enhance security and trust. So it’s no surprise that more and more companies and developers are interested in working with the technology and leveraging its potential than ev … ⌘ Read more

Blue-teaming for Exiv2, part 1: creating a security advisory process

This blog post is the first in a series about hardening the security of the Exiv2 project. My goal is to share tips that will help you harden the security of your own project. ⌘ Read more

@movq@www.uninformativ.de What do you think about this?

```diff
diff --git a/jenny b/jenny
index b47c78e..20cf659 100755
--- a/jenny
+++ b/jenny
@@ -278,7 +278,8 @@ def prefill_for(email, reply_to_this, self_mentions):
 def process_feed(config, nick, url, content, lasttwt):
     nick_address, nick_desc = decide_nick(content, nick)
     url_for_hash = decide_url_for_hash(content, url)
-    new_lasttwt = parse('1800-01-01T12:00:00+00:00').timestamp()
+    # new_lasttwt = parse('1800-01-01T12:00:00+00:00').timestamp()
+    new_lasttwt = None
 
     for line in twt_lines_from_content(content):
         res = twt_line_to_mail(
@@ -296,7 +297,7 @@ def process_feed(config, nick, url, content, lasttwt):
         twt_stamp = twt_date.timestamp()
         if lasttwt is not None and lasttwt >= twt_stamp:
             continue
-        if twt_stamp > new_lasttwt:
+        if not new_lasttwt or twt_stamp > new_lasttwt:
             new_lasttwt = twt_stamp
 
         mailname_new = join(config['maildir_target'], 'new', twt_hash)
```

Don’t miss step 0 (I should have made this a separate point): having a meta header that promises twts are appended with strictly monotonically increasing timestamps.

(Also, I’d first like to see the pagination thingy implemented.)
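Step 0 describes a property a client could actually check. Here is a minimal sketch of such a check. It assumes twt lines of the form `TIMESTAMP<TAB>TEXT`, where every timestamp is RFC 3339 and carries a UTC offset (naive and offset-aware datetimes cannot be compared). It also assumes that lines starting with `#` are comments or meta lines. The function names are hypothetical and are not part of jenny.

```python
from datetime import datetime
from typing import Iterable


def parse_stamp(s: str) -> datetime:
    # fromisoformat() before Python 3.11 does not accept a trailing "Z".
    return datetime.fromisoformat(s.replace("Z", "+00:00"))


def is_strictly_increasing(lines: Iterable[str]) -> bool:
    """True if every twt is strictly later than the one before it."""
    prev = None
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue  # skip blank and comment/meta lines
        stamp = parse_stamp(line.split("\t", 1)[0])
        # Aware datetimes compare by instant, so different offsets are fine.
        if prev is not None and stamp <= prev:
            return False
        prev = stamp
    return True
```

Because the comparison is made by instant, `2022-01-01T01:00:00+01:00` counts as a repeat of `2022-01-01T00:00:00Z` and is rejected. This matters for a feed that promises strictly increasing timestamps.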

In jenny, I would like to see the “don’t process previously fetched twts” (AKA “Allow the user to archive/delete old twts”) feature implemented ;-)

My thoughts about range requests

In addition to pagination, range requests should also be used to reduce traffic.

I understand that there are corner cases making this a complicated matter.

I would like to see a meta header saying that the given twtxt is append only with increasing timestamps so that a simple strategy can detect valid content fetched per range request.

- read the meta part via a range request

- read the last fetched twt at its expected range (as known from the last fetch)

- if the fetched content starts with the expected twt, process the rest of the data

- if the fetched content doesn’t start with the expected twt, discard it all and fall back to fetching the whole twtxt

Pagination (e.g. archiving old content in a different file) will lead to point 4.

Of course, pods in particular should support range requests, correct @prologic@twtxt.net?
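The four steps above can be sketched in Python. This is a hypothetical illustration and not jenny's code. `fetch_range(first, last)` and `fetch_all()` are injected callables; in practice they would be HTTP GETs, the first sending `Range: bytes=first-last` (or `bytes=first-` when `last` is `None`). The `# append_only = true` line is an assumed name for the promised meta header, since twtxt does not define one. If a server ignores `Range` and returns the whole file, the step 3 check fails and the code falls back to step 4.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# Hypothetical meta header; twtxt defines no such field, the name is an assumption.
APPEND_ONLY_HEADER = b"# append_only = true"


@dataclass
class FeedState:
    offset: int      # byte offset at which the last processed twt starts
    last_twt: bytes  # raw bytes from that offset to the end of the file


def twts(data: bytes) -> List[bytes]:
    # Keep non-empty lines that are not "#" comment/meta lines.
    return [l for l in data.splitlines() if l and not l.startswith(b"#")]


def state_after(data: bytes, base: int) -> Optional[FeedState]:
    # Remember where the last line of `data` starts, as an absolute offset.
    body = data.rstrip(b"\n")
    if not body:
        return None
    start = body.rfind(b"\n") + 1
    return FeedState(base + start, data[start:])


def fetch_new_twts(
    fetch_range: Callable[[int, Optional[int]], bytes],
    fetch_all: Callable[[], bytes],
    state: Optional[FeedState],
    meta_size: int = 1024,
) -> Tuple[List[bytes], Optional[FeedState], bool]:
    """Return (twts to process, new state, whether the range path was used)."""
    if state is not None:
        # 1. Read only the meta part at the top of the file.
        meta = fetch_range(0, meta_size - 1)
        if APPEND_ONLY_HEADER in meta.splitlines():
            # 2. Read from the last fetched twt's known offset onwards.
            tail = fetch_range(state.offset, None)
            # 3. Still starts with the expected twt: everything after it is new.
            if tail.startswith(state.last_twt):
                new = tail[len(state.last_twt):]
                return twts(new), state_after(tail, state.offset) or state, True
    # 4. No state, no promise, or unexpected content: fetch the whole file.
    # The caller still has to skip already-seen twts (e.g. by hash) here.
    whole = fetch_all()
    return twts(whole), state_after(whole, 0), False
```

Keeping the network out of `fetch_new_twts` makes the fallback logic easy to exercise with in-memory byte strings standing in for the server.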

@laz@tt.vltra.plus

How do you handle upgrades like this on your pod? Do you keep a diff of your customisations, or is it all a manual process?

@movq@www.uninformativ.de I am getting this when I run it from cron (extra lines in between because otherwise jenny would mash them together):

```
Traceback (most recent call last):
  File "/home/quark/jenny/jenny", line 565, in <module>
    if not retrieve_all(config):
  File "/home/quark/jenny/jenny", line 373, in retrieve_all
    refresh_self(config)
  File "/home/quark/jenny/jenny", line 294, in refresh_self
    process_feed(config, config['self_nick'], config['self_url'], content)
  File "/home/quark/jenny/jenny", line 280, in process_feed
    fp.write(mail_body)
  File "/usr/lib/python3.8/encodings/iso8859_15.py", line 19, in encode
    return codecs.charmap_encode(input,self.errors,encoding_table)[0]
UnicodeEncodeError: 'charmap' codec can't encode character '\U0001f4e3' in position 31: character maps to <undefined>
```
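The traceback shows `fp.write` going through the `iso8859_15` codec. This suggests that, under cron, Python picked up a non-UTF-8 locale: `open()` in text mode uses the locale's preferred encoding when no `encoding=` is given. U+1F4E3 (the 📣 emoji) has no ISO-8859-15 byte. Below is a minimal sketch of the failure and of one possible fix. It assumes jenny opens the mail file without an explicit encoding, which the traceback does not confirm. The file name and `mail_body` content are made up.

```python
import os
import tempfile

mail_body = "Subject: test\n\n\U0001f4e3 announcement\n"

# Reproduce the error: ISO-8859-15 has no mapping for U+1F4E3.
try:
    mail_body.encode("iso8859_15")
    reproduced = False
except UnicodeEncodeError:
    reproduced = True

# Sketch of a fix: name the encoding instead of inheriting it from the locale.
path = os.path.join(tempfile.mkdtemp(), "mail")
with open(path, "w", encoding="utf-8") as fp:
    fp.write(mail_body)
```

If changing the code is not an option, setting `PYTHONUTF8=1` (Python 3.7+ UTF-8 mode) or a UTF-8 `LANG` in the crontab should have a similar effect for the whole script.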

@prologic@twtxt.net Excellent, nothing broke. I think what happened was you replied to a twt that I was in the process of editing.

#event Tomorrow, Saturday October 2nd, I’m gonna be hosting a workshop at Processing Community Day CPH about Live Coding Visuals in Improviz. Only 5 spots left, so sign up now at: https://pcdcph.com

An analysis on developer-security researcher interactions in the vulnerability disclosure process

We put out a call to open source developers and security researchers to talk about the security vulnerability disclosure process. Here’s what we found. ⌘ Read more

ProcessOne: ejabberd 21.07 ⌘ Read more…

When tragedy strikes unexpectedly we cannot just go on as if nothing happened. Our minds need to be given time to deal with the blow. So it is necessary to pause and allow ourselves to process and recover.

ProcessOne: Install and configure MariaDB with ejabberd ⌘ Read more…

https://github.com/learnbyexample/Command-line-text-processing/blob/master/ruby_one_liners.md oneliner ruby

ProcessOne: Install ejabberd on Windows 10 using Docker Desktop ⌘ Read more…

@movq@www.uninformativ.de “Random thought: Would be great if you could do for i in ...; do something "$i" & done ; wait in a Shell script, but with the Shell only spawning one process per CPU.” -> Interesting which annoyances stay in the back of the head – I’d never articulated this, but it’s absolutely true that this would be great.
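Outside the shell, the same idea is short in Python: keep a pool with one worker per CPU, where each worker launches one child process and waits for it. This keeps at most that many children running at once. The sketch below is an illustration and not a shell feature; `run_bounded` is a hypothetical name. (On the shell side, `xargs -P` offers a bounded number of parallel jobs.)

```python
import os
import subprocess
import sys
from concurrent.futures import ThreadPoolExecutor


def run_bounded(cmd_for, items, jobs=None):
    """Run the command cmd_for(item) for every item, at most `jobs` at a time.

    Returns the exit codes in input order.
    """
    jobs = jobs or os.cpu_count() or 1

    def run(item):
        # Each worker thread blocks on one child, so at most `jobs` run at once.
        return subprocess.run(cmd_for(item)).returncode

    with ThreadPoolExecutor(max_workers=jobs) as pool:
        # Leaving the with-block waits for everything, like `wait` in the shell.
        return list(pool.map(run, items))


if __name__ == "__main__":
    # Demo: each child exits with a status derived from its argument.
    print(run_bounded(lambda i: [sys.executable, "-c", f"import sys; sys.exit({i} % 3)"], range(6)))
```

Threads are enough here because the Python side only waits on subprocesses; the actual work happens in the children.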

ProcessOne: ejabberd 21.04 ⌘ Read more…

ProcessOne: ejabberd 21.01 ⌘ Read more…

ProcessOne: Install ejabberd on Windows 7 using Docker Toolbox ⌘ Read more…